Is there anything astronomers can’t find out from a couple of pixels?

They’re still trying to find a computer capable of measuring the mass of your mom

Uncalled for but 10/10 execution

🔥

That’s like, their whole life, it sounds like.

Geologists stare at rock formations, astronomers stare at fuzzy pixels. In both cases they study crazy things from deep time, but in a way that would slowly drive me mad.

You can tell a lot from how a CMOS or CCD picks up a couple photons

“This image was generated anywhere between 3 and 3 million years ago by an AI”

Another arm in the arms race. The next gen of face generation will have this mastered.

Does it really work like that? I would say that they are not trying to fool any test, just trying to be harder to detect, the goal being to look completely realistic.

This is one of the basic techniques to spot AI fakes:

- Correct number of body features (limbs, fingers, eyes…)

- Non-intersecting body features

- Surface continuity (skin, clothes, walls…)

- ➡️ Eye reflections

- Consistent illumination of features

- Consistent shadows

The “test” they’re trying to fool is kind of the Turing test: whether humans can tell them apart.

It’s quite easy to trick people with untrained eyes… for one, they have no idea what “consistent illumination” and the like mean. And something being off doesn’t mean that an AI made the mistake, because humans make mistakes, too – photographs don’t, but the general problem is not just about telling realistic images apart but also illustrations. You’re looking specifically for mistakes that AI is likely to make but humans are practically never going to make. And yes, humans get hands wrong all the time.

Here’s a good video about what to look for and what not.

Yes, my comment applied more to photorealistic AI images.

Illustrations are a different beast, where people have much more creative freedom… and that video is reasonably good at explaining that, but I find it falls short at some points:

- AI image generators don’t “consult” source images to generate an output. At training time, they extract patterns from the training set, which is never again used for generation, only the extracted patterns are.

- Modern AI generators are increasingly good at generating text. They still struggle a bit, but compared to a year ago, they can now generate headlines and large text correctly, while the mess gets shoved into smaller and less important text. This isn’t all that different from human artists adding “filler gibberish” text.

- Layers. While a naive (and cheaper) approach to AI generation doesn’t use layers, there are generators which do use layers, and can keep object consistency across obscured or cut-off sections.

As AI generators advance, all these differences are likely to disappear… by following these same criticisms to fix things.

AI image generators don’t “consult” source images to generate an output.

Well, you have an artist breaking things down for an audience understanding neither the technical nor artistic aspect…

Modern AI generators are increasingly good at generating text. They still struggle a bit

I mean… SDXL still struggles a lot. The only thing you can get it to spell reliably is probably “Hooters”. There’s the odd LoRA which makes it not suck completely, but it’s still nowhere near actually good at generating text; the training just isn’t there. And even with that in place, things like signatures are probably going to be gibberish.

While a naive (and cheaper) approach to AI generation doesn’t use layers, there are generators which do use layers,

Unless you start off training by feeding the model 3d data (say, voxels) alongside 2d projections I don’t think it’s ever going to develop a proper understanding of these kinds of things. Or, differently put: Learning object permanence (of sorts, related) is a meta-cognitive abstraction step that just won’t happen with the type of topologies we know how to engineer. It’s probably like 90% on the way towards AGI, so to get a simple topology to understand it we have to spoon-feed it permanence information alongside the (apparent) non-permanence.

Consistent illumination and shadows is a rabbit hole we really don’t want to hop into.

Outside of very obvious anomalies, even a trained eye will have a hard time discerning what’s going on.

Some are very easy to spot, like a character’s shadow that is missing a limb or has a different placement or pose. Parallel surfaces whose illumination or shadowing varies without a reason are also a dead giveaway. But the most damning evidence is having one scene, then a slightly different scene in a reflection.

There are reasons for human authors to do any of these on purpose, but unless that purpose is part of the work, they’re most likely AI mistakes.

Of course it’s kind of funny how there is already a large overlap between the best AI art, and the most senseless “modern art”.

Looking completely realistic and being able to discern between real and fake are competing goals. If you can discern the difference, then it does not look completely realistic.

I think what they’re alluding to is generative adversarial networks (https://en.m.wikipedia.org/wiki/Generative_adversarial_network), where creating a better discriminator, one that can tell a good image from a bad one, is how you get a better image.
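For anyone who wants the idea in symbols, this is the usual way the GAN objective is written. The notation is the textbook one and not something from the article: $D$ is the discriminator, $G$ is the generator, $p_{\text{data}}$ is the distribution of real images and $p_{z}$ is the noise the generator starts from.

```latex
\min_{G} \max_{D} \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_{z}}\left[\log\left(1 - D(G(z))\right)\right]
```

The discriminator pushes $V$ up by scoring real images near 1 and generated ones near 0, while the generator pushes it down by producing images the discriminator scores as real. That tug of war is the "better detector gives you a better image" loop.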

If there’s an algorithm for detecting deep fakes, there’s an algorithm for creating an AI capable of fooling that algorithm.

And then someone will create a new AI capable of defeating the old one. It’s just AIs all the way.

And they’ll train that AI on the first AI’s detection performance. This setup is called a generative adversarial network, or GAN. It works really well and allows AI to become superhuman by having superhuman obstacles to overcome.

This should work on its face because many machine learning algorithms optimize a Gini measure, e.g. a decision tree classifier makes binary splits based on the greatest reduction in Gini impurity; astronomers use something similar to compress the data sent back from space telescope cameras to a reasonable filesize, so if a picture of a face has weird Gini coefficients then it makes sense that it would’ve been AI generated.
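One caveat on the decision tree comparison: the split criterion used by CART-style trees is Gini impurity, which shares a name with the Gini coefficient from the article but is a different quantity. A minimal sketch of the tree-side calculation, with hypothetical function names and toy labels that are only there for illustration:

```python
from collections import Counter


def gini_impurity(labels):
    """Gini impurity of a list of class labels: 1 - sum of squared class shares."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def impurity_reduction(parent, left, right):
    """How much a binary split lowers impurity, weighting children by size."""
    n = len(parent)
    weighted_children = (
        len(left) / n * gini_impurity(left)
        + len(right) / n * gini_impurity(right)
    )
    return gini_impurity(parent) - weighted_children


# Toy labels (made up): four real photos and four fakes.
parent = ["real"] * 4 + ["fake"] * 4
perfect = impurity_reduction(parent, ["real"] * 4, ["fake"] * 4)
useless = impurity_reduction(
    parent, ["real", "real", "fake", "fake"], ["real", "real", "fake", "fake"]
)
```

A tree picks the split with the largest reduction, so here it would prefer the split that separates real from fake cleanly over the one that leaves both sides mixed.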

To quote the article.

While the eye-reflection technique offers a potential path for detecting AI-generated images, the method might not work if AI models evolve to incorporate physically accurate eye reflections, perhaps applied as a subsequent step after image generation.

I’m not discouraging AI detection, we will absolutely need it in the future, but we have to acknowledge that AI detection is a cat and mouse game.

oh absolutely, it’s specifically a generative adversarial network!

using galaxy-measurement tools

What if they use an iPhone?

🤖 I’m a bot that provides automatic summaries for articles:

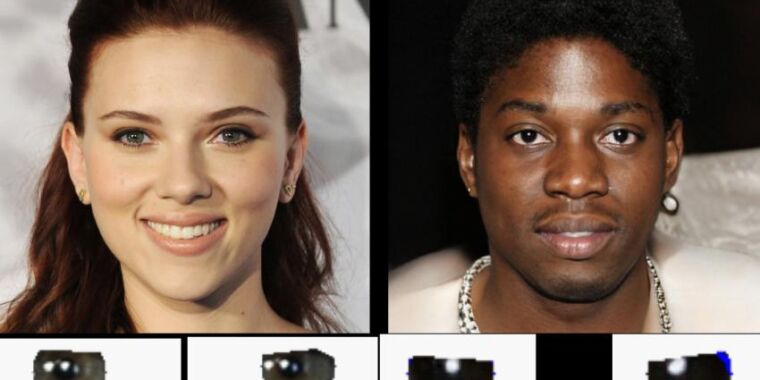

Researchers at the University of Hull recently unveiled a novel method for detecting AI-generated deepfake images by analyzing reflections in human eyes.

Adejumoke Owolabi, an MSc student at the University of Hull, headed the research under the guidance of Dr. Kevin Pimbblet, professor of astrophysics.

In some ways, the astronomy angle isn’t always necessary for this kind of deepfake detection because a quick glance at a pair of eyes in a photo can reveal reflection inconsistencies, which is something artists who paint portraits have to keep in mind.

They used the Gini coefficient, typically employed to measure light distribution in galaxy images, to assess the uniformity of reflections across eye pixels.
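As a rough illustration of that idea, here is a minimal sketch of a Gini coefficient over pixel brightness values. It is not the researchers' code: the function names, the made-up pixel lists, and the choice to compare the left eye against the right eye are all assumptions for the example.

```python
def gini(values):
    """Gini coefficient of non-negative values.

    0 means the light is spread perfectly evenly across the pixels;
    values near 1 mean it is concentrated in a few pixels.
    """
    xs = sorted(values)
    n = len(xs)
    total = sum(xs)
    if n == 0 or total == 0:
        return 0.0
    # Standard formula on sorted data:
    # G = sum_i (2i - n - 1) * x_i / (n * total), with i running from 1 to n.
    weighted = sum((2 * i - n - 1) * x for i, x in enumerate(xs, start=1))
    return weighted / (n * total)


def reflection_mismatch(left_eye, right_eye):
    """Absolute difference between the two eyes' Gini coefficients (hypothetical score)."""
    return abs(gini(left_eye) - gini(right_eye))


# Made-up brightness values for small eye patches (0-255).
left = [10, 12, 11, 200, 210, 12]       # a sharp highlight
right = [10, 11, 12, 205, 198, 11]      # a matching highlight in the other eye
odd_right = [90, 95, 100, 92, 97, 94]   # flat, no comparable highlight

consistent_score = reflection_mismatch(left, right)
suspicious_score = reflection_mismatch(left, odd_right)
```

In this toy data the matching pair scores close to zero while the mismatched pair scores much higher. Where to draw the line between the two is not something the summary states, and as it notes, real photos can also produce mismatches.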

The approach also risks producing false positives, as even authentic photos can sometimes exhibit inconsistent eye reflections due to varied lighting conditions or post-processing techniques.

But analyzing eye reflections may still be a useful tool in a larger deepfake detection toolset that also considers other factors such as hair texture, anatomy, skin details, and background consistency.
